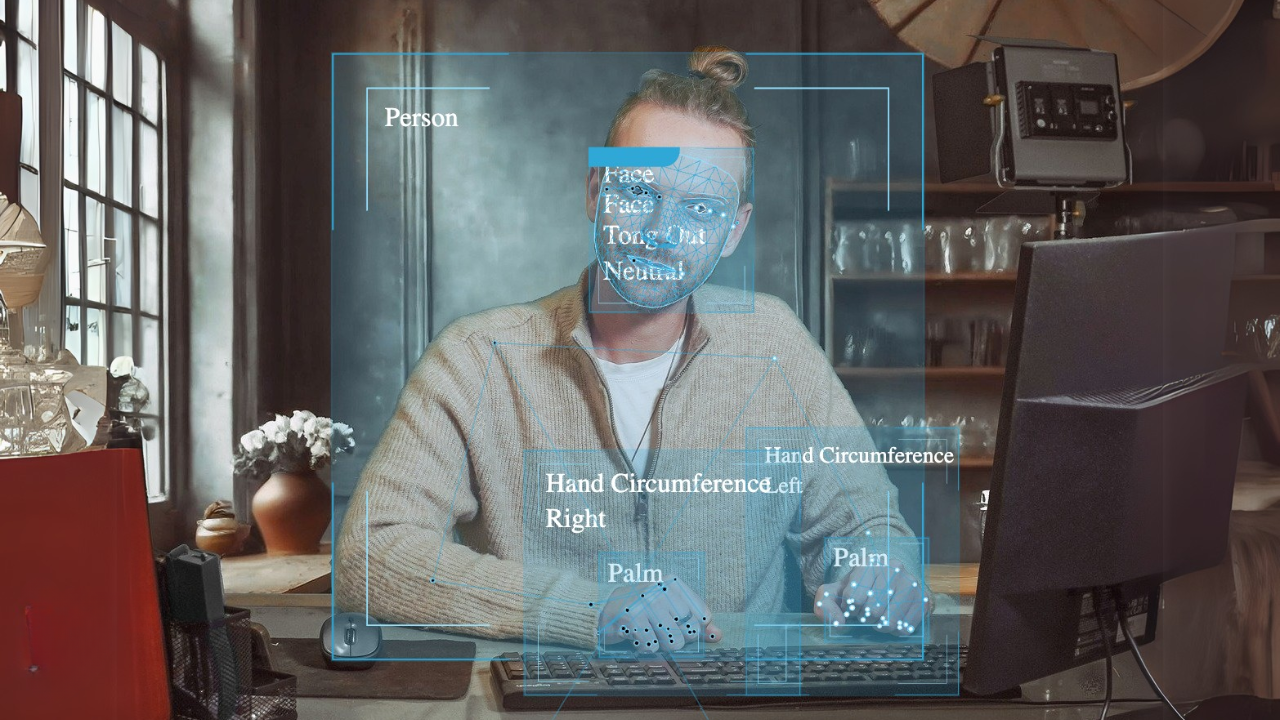

Person w/3D Face, Body, and Hands

3D keypoints for face, body, and hands—from images, video, and live streams

eyepop.person.3d-body-points.full:latest


Model type

Pre-trained Model

How It Works

Go deeper with 3D keypoint extraction. This model maps three-dimensional features of the human face, body, and hands and returns structured keypoint coordinates with a confidence score per keypoint, so you can power detailed gesture recognition, sign language interpretation, and advanced VR/AR integrations.

Use it on images, recorded video, or live streams. No custom training required.

This model is optimized for:

- 3D keypoint outputs (x, y, z) for face, pose, and hands

- Multi-person scenes (where supported)

- Frame-by-frame results for video

- Cloud or On-Prem deployment

- Fast prototyping → production use

Why This Model Exists

Bounding boxes tell you where a person is. 2D keypoints tell you how they’re moving.

But some applications require a deeper layer of precision:

- Fine-grained hand articulation (fingers, pinches, grips)

- Face geometry signals (landmarks for expression + intent)

- Depth-aware pose (body position in 3D space)

- Stable tracking inputs for immersive or interactive systems

Most teams hit friction here because 3D keypoint pipelines are notoriously hard to stand up:

- Too many model formats and skeleton standards

- Large outputs that are painful to integrate and validate

- Inconsistent results across lighting, motion blur, occlusion, or camera angles

- Complexity that turns “cool demo” into “unshippable feature”

This model exists to remove that friction.

It provides a production-ready baseline for 3D face + body + hand keypoints so teams can focus on building experiences, analytics, and product logic, not model wrangling.

Key Capabilities

Input Types

- Single images

- Video files

- RTSP / livestream feeds

- Webcam / IP camera streams

Output

- JSON with 3D keypoints (x, y, z)

- Confidence per keypoint

- Grouped by person + region (face / body / left hand / right hand)

- Frame-level results for video and streams

Deployment

- EyePop Cloud

- On-Premise AI Application Runtime

- Edge devices with GPU or CPU

Setup

- Create account

- Get API key

- Send media

- Receive structured 3D keypoints instantly

No training. No labeling. No model configuration.

Example Output

    {
      "keyPoints": [
        {
          "id": 34,
          "points": [
            {"classId": 0, "classLabel": "nose", "confidence": 0.9491, "id": 1, "x": 234.161, "y": 635.132, "z": -1689.297},
            {"classId": 1, "classLabel": "left eye (inner)", "confidence": 0.9426, "id": 2, "x": 260.307, "y": 572.736, "z": -1701.229},
            {"classId": 2, "classLabel": "left eye", "confidence": 0.938, "id": 3, "x": 284.693, "y": 567.8, "z": -1700.895},
            {"classId": 3, "classLabel": "left eye (outer)", "confidence": 0.9202, "id": 4, "x": 309.191, "y": 563.186, "z": -1701.186},
            {"classId": 4, "classLabel": "right eye (inner)", "confidence": 0.9408, "id": 5, "x": 201.415, "y": 579.59, "z": -1704.338},
            {"classId": 5, "classLabel": "right eye", "confidence": 0.9248, "id": 6, "x": 177.701, "y": 579.957, "z": -1704.576},
            {"classId": 6, "classLabel": "right eye (outer)", "confidence": 0.9042, "id": 7, "x": 154.157, "y": 580.182, "z": -1704.742},
            {"classId": 7, "classLabel": "left ear", "confidence": 0.9163, "id": 8, "x": 346.055, "y": 564.255, "z": -1444.702},
            {"classId": 8, "classLabel": "right ear", "confidence": 0.905, "id": 9, "x": 134.999, "y": 586.726, "z": -1456.682},
            {"classId": 9, "classLabel": "mouth (left)", "confidence": 0.7761, "id": 10, "x": 284.093, "y": 669.511, "z": -1557.855},
            {"classId": 10, "classLabel": "mouth (right)", "confidence": 0.6729, "id": 11, "x": 205.256, "y": 679.134, "z": -1561.074},
            {"classId": 11, "classLabel": "left shoulder", "confidence": 0.9718, "id": 12, "x": 403.004, "y": 705.527, "z": -1015.704},
            {"classId": 12, "classLabel": "right shoulder", "confidence": 0.9674, "id": 13, "x": 162.64, "y": 688.311, "z": -1096.564},
            {"classId": 13, "classLabel": "left elbow", "confidence": 0.9172, "id": 14, "x": 431.617, "y": 884.688, "z": -430.739},
            {"classId": 14, "classLabel": "right elbow", "confidence": 0.9757, "id": 15, "x": 162.3, "y": 800.236, "z": -520.094},
            {"classId": 15, "classLabel": "left wrist", "confidence": 0.8391, "id": 16, "x": 332.516, "y": 963.84, "z": -31.31},
            {"classId": 16, "classLabel": "right wrist", "confidence": 0.9754, "id": 17, "x": 109.046, "y": 679.181, "z": 123.114},
            {"classId": 17, "classLabel": "left pinky", "confidence": 0.7455, "id": 18, "x": 323.187, "y": 1004.396, "z": 43.841},
            {"classId": 18, "classLabel": "right pinky", "confidence": 0.9598, "id": 19, "x": 97.278, "y": 649.457, "z": 195.701},
            {"classId": 19, "classLabel": "left index", "confidence": 0.7072, "id": 20, "x": 302.3, "y": 984.787, "z": 8.918},
            {"classId": 20, "classLabel": "right index", "confidence": 0.9442, "id": 21, "x": 75.983, "y": 643.261, "z": 174.003},
            {"classId": 21, "classLabel": "left thumb", "confidence": 0.7428, "id": 22, "x": 299.241, "y": 970.911, "z": -29.879},
            {"classId": 22, "classLabel": "right thumb", "confidence": 0.9626, "id": 23, "x": 93.032, "y": 658.908, "z": 134.589},
            {"classId": 23, "classLabel": "left hip", "confidence": 0.9957, "id": 24, "x": 364.58, "y": 1144.101, "z": 20.53},
            {"classId": 24, "classLabel": "right hip", "confidence": 0.9958, "id": 25, "x": 237.728, "y": 1120.395, "z": -20.034},
            {"classId": 25, "classLabel": "left knee", "confidence": 0.8921, "id": 26, "x": 337.031, "y": 1351.712, "z": 492.682},
            {"classId": 26, "classLabel": "right knee", "confidence": 0.9394, "id": 27, "x": 255.721, "y": 1361.324, "z": 435.646},
            {"classId": 27, "classLabel": "left ankle", "confidence": 0.899, "id": 28, "x": 285.797, "y": 1385.545, "z": 1363.823},
            {"classId": 28, "classLabel": "right ankle", "confidence": 0.8963, "id": 29, "x": 223.22, "y": 1530.095, "z": 1110.064},
            {"classId": 29, "classLabel": "left heel", "confidence": 0.8834, "id": 30, "x": 283.148, "y": 1397.849, "z": 1462.036},
            {"classId": 30, "classLabel": "right heel", "confidence": 0.8636, "id": 31, "x": 224.738, "y": 1548.679, "z": 1188.204},
            {"classId": 31, "classLabel": "left foot index", "confidence": 0.7937, "id": 32, "x": 234.789, "y": 1440.039, "z": 1504.145},
            {"classId": 32, "classLabel": "right foot index", "confidence": 0.6736, "id": 33, "x": 235.119, "y": 1653.121, "z": 1134.64}
          ]
        }
      ],
      "seconds": 0,
      "source_height": 1882,
      "source_id": "578c8f4c-18c6-11f1-b631-8e1aed86f95b",
      "source_width": 1094,
      "system_timestamp": 1772737558305993000,
      "timestamp": 0
    }

(Swap this schema to match your exact landmark set: face mesh count, pose skeleton, and hand joint naming.)
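One way to consume a result like the one above is to flatten it into a label-to-coordinates lookup and drop low-confidence points. The sketch below assumes only the field names shown in the example (`keyPoints`, `points`, `classLabel`, `confidence`, `x`, `y`, `z`). The helper name `index_keypoints`, the 0.7 threshold, and the trimmed sample payload are illustrative choices, not part of the EyePop API.

```python
import json

# Trimmed sample reusing field names and values from the example output.
SAMPLE = """
{
  "keyPoints": [
    {"id": 34, "points": [
      {"classLabel": "nose", "confidence": 0.9491,
       "x": 234.161, "y": 635.132, "z": -1689.297},
      {"classLabel": "mouth (right)", "confidence": 0.6729,
       "x": 205.256, "y": 679.134, "z": -1561.074},
      {"classLabel": "left hip", "confidence": 0.9957,
       "x": 364.58, "y": 1144.101, "z": 20.53}
    ]}
  ],
  "source_width": 1094,
  "source_height": 1882
}
"""


def index_keypoints(result, min_confidence=0.7):
    """Return {group id: {label: (x, y, z)}}, skipping low-confidence points.

    Hypothetical helper; the 0.7 default is an arbitrary starting point.
    """
    indexed = {}
    for group in result.get("keyPoints", []):
        points = {}
        for p in group.get("points", []):
            if p.get("confidence", 0.0) >= min_confidence:
                points[p["classLabel"]] = (p["x"], p["y"], p["z"])
        indexed[group["id"]] = points
    return indexed


result = json.loads(SAMPLE)
by_group = index_keypoints(result)
print(sorted(by_group[34]))  # ['left hip', 'nose']
```

Filtering early keeps downstream logic from reacting to weakly detected landmarks, such as the `mouth (right)` point in this sample.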

Practical Use Cases

Gesture Recognition & Interaction

- Fine gesture inputs (pinch, point, grab, swipe)

- Hand motion + intent cues for UI control

- Touchless kiosk / interface interactions
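As a rough illustration of a fine gesture input, a pinch can be approximated as a small 3D gap between thumb and index fingertip, measured relative to hand size so the test does not depend on distance from the camera. This is a minimal sketch under stated assumptions: the fingertip, wrist, and index-base points are hypothetical inputs (the exact hand landmark names depend on the landmark set you receive), and the 0.3 ratio is an arbitrary starting value to tune on your own footage.

```python
import math


def is_pinch(thumb_tip, index_tip, wrist, index_base, ratio=0.3):
    """True when the thumb-to-index gap is small relative to hand size.

    All points are (x, y, z) tuples in the same units. The wrist-to-index-base
    distance serves as the scale reference (an assumption of this sketch).
    """
    hand_size = math.dist(wrist, index_base)
    if hand_size == 0:
        return False  # degenerate hand: cannot judge scale
    return math.dist(thumb_tip, index_tip) / hand_size < ratio


# Hypothetical coordinates, not taken from real model output.
wrist = (100.0, 200.0, 0.0)
index_base = (100.0, 120.0, 0.0)  # hand size = 80

print(is_pinch((110.0, 100.0, 5.0), (112.0, 96.0, 4.0), wrist, index_base))  # True
print(is_pinch((60.0, 110.0, 0.0), (112.0, 60.0, 0.0), wrist, index_base))   # False
```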

Sign Language & Communication Interfaces

- Hand-shape + motion tracking inputs

- Landmark sequences for downstream interpretation models

- Accessibility tooling prototypes

VR/AR & Spatial Experiences

- 3D pose anchors for avatar control

- Face + hand rigging inputs

- Spatial interaction mapping for immersive apps
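Per-frame keypoints usually jitter slightly, which is noticeable when they drive an avatar or a spatial anchor. A common remedy is an exponential moving average across frames. The sketch below is one such filter, not something the model applies for you: the class name `KeypointSmoother` and the `alpha` default are assumptions, and the input is a plain `{label: (x, y, z)}` dictionary built from each frame's result.

```python
class KeypointSmoother:
    """Exponential moving average over per-frame keypoint dictionaries."""

    def __init__(self, alpha=0.5):
        if not 0 < alpha <= 1:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha  # higher = more responsive, lower = smoother
        self.state = {}     # last smoothed position per label

    def update(self, points):
        """Blend one frame of {label: (x, y, z)} into the running state.

        Labels missing from a frame keep their previous smoothed value.
        """
        for label, xyz in points.items():
            prev = self.state.get(label)
            if prev is None:
                self.state[label] = tuple(float(v) for v in xyz)
            else:
                self.state[label] = tuple(
                    self.alpha * new + (1 - self.alpha) * old
                    for new, old in zip(xyz, prev)
                )
        return dict(self.state)


smoother = KeypointSmoother(alpha=0.5)
smoother.update({"nose": (0.0, 0.0, 0.0)})
print(smoother.update({"nose": (10.0, 20.0, -4.0)}))  # {'nose': (5.0, 10.0, -2.0)}
```

A fixed `alpha` trades lag for stability. For fast interactions, an adaptive filter that raises `alpha` with speed may feel more responsive.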

Animation & Character Systems

- Body + hand pose capture inputs

- Expression and facial landmark signals

- Retargeting pipelines (with your rigging layer)
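Before handing keypoints to a rigging layer, it often helps to express them in a body-relative frame so the pose does not depend on where the person stands or how large they appear. The following sketch assumes the `left hip`, `right hip`, `left shoulder`, and `right shoulder` labels from the example output are present. Centering on the hip midpoint and scaling by shoulder width is one convention among several, and `normalize_pose` is a hypothetical helper name.

```python
import math


def normalize_pose(points):
    """Translate so the hip midpoint is the origin; scale by shoulder width.

    points: {label: (x, y, z)}. Returns a new dictionary with the same labels.
    """
    left_hip, right_hip = points["left hip"], points["right hip"]
    origin = tuple((a + b) / 2 for a, b in zip(left_hip, right_hip))
    scale = math.dist(points["left shoulder"], points["right shoulder"])
    if scale == 0:
        raise ValueError("shoulders coincide; cannot derive a scale")
    return {
        label: tuple((v - o) / scale for v, o in zip(xyz, origin))
        for label, xyz in points.items()
    }


# Hypothetical, hand-made pose for illustration.
pose = {
    "left hip": (-1.0, 0.0, 0.0),
    "right hip": (1.0, 0.0, 0.0),
    "left shoulder": (-2.0, 4.0, 0.0),
    "right shoulder": (2.0, 4.0, 0.0),
}
print(normalize_pose(pose)["left shoulder"])  # (-0.5, 1.0, 0.0)
```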

Research & Analytics

- Detailed movement analysis

- Micro-motions in hands + face

- Behavior and interaction pattern studies (with appropriate consent/workflows)

Why 3D Keypoints Matter

3D keypoints give you depth-aware structure.

That unlocks:

- More reliable pose interpretation across angle changes

- Better tracking inputs for immersive systems

- Fine motor detail for hands and facial landmarks

- Cleaner downstream features for recognition and interaction logic
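To make the angle-change point concrete: a joint angle computed from three 3D points stays the same when the whole body rotates relative to the camera, whereas the same angle measured in 2D changes with viewpoint. The sketch below computes the angle at a middle joint using the shoulder, elbow, and wrist values from the example output. It assumes x, y, and z share a common scale; if z is reported on a different scale in your results, rescale it first. `joint_angle` is an illustrative helper name.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at point b between segments b->a and b->c."""
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    norm = math.hypot(*ba) * math.hypot(*bc)
    if norm == 0:
        raise ValueError("degenerate joint: coincident points")
    cos_theta = sum(u * v for u, v in zip(ba, bc)) / norm
    # Clamp to guard against floating-point drift outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))


# Left arm landmarks copied from the example output.
left_shoulder = (403.004, 705.527, -1015.704)
left_elbow = (431.617, 884.688, -430.739)
left_wrist = (332.516, 963.84, -31.31)

print(round(joint_angle(left_shoulder, left_elbow, left_wrist), 1))
```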

If you’re building anything “interactive” or “immersive,” 3D keypoints are often the difference between a novelty demo and a usable feature.

Deployment Options

EyePop Cloud

- Scalable

- Managed infrastructure

- Best for web apps + fast iteration

On-Premise Runtime

- Keep video inside your network

- Lower latency options

- Works with GPU or CPU environments

- Ideal for sensitive footage or regulated contexts

Who This Is For

- Developers building VR/AR or interactive camera experiences

- Teams working on gesture or sign-language interfaces

- Product teams needing structured 3D keypoint outputs without ML staffing

- Anyone who needs face + body + hands mapped in 3D—fast
